AI Medical Chatbots: When Trusting Your Health to an Algorithm Can Be Fatal

Date: March 13, 2026

Reading time: 26 minutes

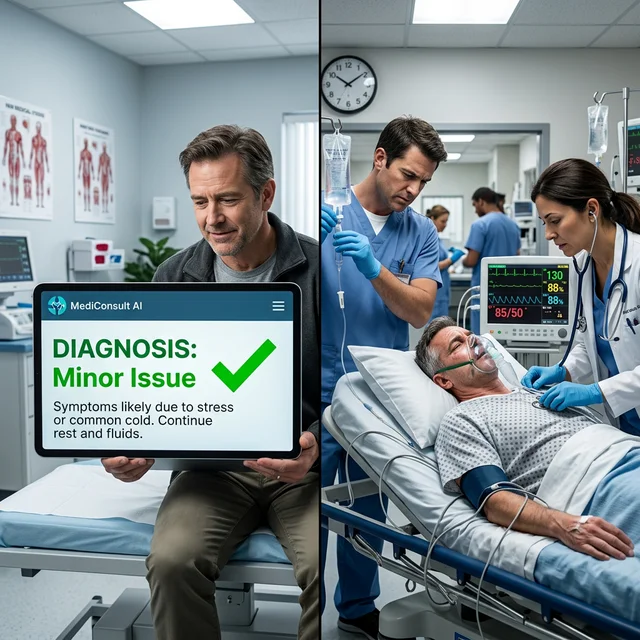

In January 2026, a 34-year-old woman in Ohio died after following the recommendations of an AI chatbot to treat what she believed was a persistent migraine. The chatbot, one of many free "AI medical consultation" apps, diagnosed her symptoms as a tension headache and recommended rest and common painkillers. In reality, she had an undiagnosed cerebral aneurysm that ruptured 48 hours later. The family sued the app developer for negligence and wrongful death. This case, far from isolated, exposes a silent epidemic spreading across the digitized world: hundreds of millions of people are replacing real medical consultations with algorithms trained on incomplete, biased, and frequently outdated data. This article investigates the documented and emerging dangers of this trend, and why regulation, which should protect the public, is lagging far behind.

The Medical Chatbot Boom: Alarming Numbers

A Revolution Without Oversight

The market for AI-based medical chatbots exploded after ChatGPT's launch in November 2022. By 2026, the landscape is crowded with apps promising "instant diagnoses," "AI second opinions," and "24/7 health consultancy." The numbers are striking and concerning:

| Data Point | Value |
|---|---|
| Global AI medical chatbot users (2026) | ~450 million |
| Estimated daily consultations | ~15 million |
| Projected global market (2027) | $1.2 billion |
| AI diagnosis apps available (App Store + Play Store) | ~2,800 |
| Percentage with regulatory approval (FDA/EMA) | <3% |
| Error rate in complex diagnoses (independent studies) | 30-50% |
| Users who use AI as REPLACEMENT (not complement) for doctors | ~38% |

The most alarming figure is the last one: nearly 4 in 10 users use AI chatbots as complete substitutes for doctors — not as complementary tools. These users never consult a human healthcare professional to verify or validate the AI's "diagnosis." In developing economies, where access to medical care is limited and expensive, this proportion rises above 60%.
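To put these percentages in absolute terms, here is a minimal sketch of the arithmetic using only the estimates from the table above. The function and constant names are illustrative, and the second calculation assumes consultations are spread evenly across all users, which the source does not claim.

```python
# Figures copied from the table above; all are the article's estimates.
USERS = 450_000_000              # global AI medical chatbot users (2026)
DAILY_CONSULTATIONS = 15_000_000 # estimated consultations per day
REPLACEMENT_SHARE = 0.38         # share using AI instead of a doctor


def replacement_users(users: int, share: float) -> int:
    """Number of users implied by a given share of the user base."""
    return round(users * share)


def days_between_consultations(users: int, daily: int) -> float:
    """Average days between consultations per user, assuming uniform usage."""
    return users / daily


if __name__ == "__main__":
    # Prints 171,000,000: the "nearly 4 in 10" expressed as a head count.
    print(f"{replacement_users(USERS, REPLACEMENT_SHARE):,}")
    # Prints 30.0: roughly one consultation per user per month on average.
    print(days_between_consultations(USERS, DAILY_CONSULTATIONS))
```

By these estimates, about 171 million people rely on a chatbot with no human check on its output.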

Why People Trust AI for Health

The massive adoption is driven by powerful socioeconomic and psychological factors:

Cost: A medical consultation in the US costs $250-400 on average without insurance. An AI chatbot is free or costs $10-20/month. In Brazil, even within the public health system (SUS), the wait to see a specialist can stretch to months.

Accessibility: Chatbots are available 24/7, in any language, without travel, waiting rooms, or appointments. For rural or remote populations, they may be the only "consultation" available.

Shame and stigma: Conditions like sexually transmitted diseases, mental health issues, and digestive problems carry social stigma. Many people prefer to "ask the AI" rather than face the perceived embarrassment of an in-person consultation.

Illusion of competence: LLMs like GPT-4 and Claude are extraordinarily convincing in their responses. They articulate medical information with fluency, confidence, and accessible language that many human doctors cannot match. This fluency creates a dangerous illusion of clinical competence.

Documented Dangers: When AI Fails Fatally

Incorrect Diagnoses: The Most Direct Threat

Studies published in 2025 and 2026 reveal concerning error rates in the most popular medical chatbots:

Harvard Medical School Study (2025): Researchers submitted 400 clinical scenarios to 8 popular AI chatbots. Results were alarming:

- Correct primary diagnosis: 52-74% (varying between chatbots)

- Correct diagnosis in critical emergencies (heart attack, stroke, pulmonary embolism): 38-65%

- Correct triage recommendations ("seek emergency" vs. "can wait"): 48-71%

- "Medical hallucinations" (completely fabricated information): Detected in 12-26% of responses

The study concluded that "no current AI chatbot is safe for use as an autonomous diagnostic tool, and its unsupervised use represents significant risk to public health."

BMJ Digital Health Study (January 2026): Analyzed 1,200 real patient interactions with AI chatbots across 6 countries. Found that:

- In 23% of cases with serious symptoms requiring urgent attention, the chatbot recommended "monitoring at home"

- In 15% of cases, the chatbot suggested medications that interacted dangerously with the patient's current medications

- In 8% of cases, the chatbot confused symptoms of life-threatening conditions (such as pulmonary embolism) with those of benign conditions (such as anxiety)

Documented Cases of Harm

At least 12 deaths and hundreds of hospitalizations have been publicly associated with incorrect AI chatbot diagnoses through March 2026. The best-documented cases include:

- Ohio, USA (January 2026): 34-year-old woman — cerebral aneurysm diagnosed as tension headache. Death from rupture 48 hours later.

- Mumbai, India (November 2025): 52-year-old man — myocardial infarction diagnosed as gastroesophageal reflux. Death during AI-recommended "home treatment."

- London, UK (October 2025): 16-year-old teenager — appendicitis diagnosed as menstrual cramps. Appendiceal rupture and peritonitis. Survived after emergency surgery.

- São Paulo, Brazil (December 2025): 28-year-old woman — meningitis diagnosed as flu. ICU hospitalization for 12 days.

Algorithmic Bias: Coded Discrimination

When AI Doesn't See You

One of the most insidious problems with medical chatbots is algorithmic bias — the tendency of AI models to perform unequally across different demographic groups. This bias isn't accidental: it's a direct result of the data on which models were trained.

Most LLMs were trained predominantly on medical data from white, male, developed-country populations. This means AI may:

- Underestimate pain in Black patients: Studies have shown that AI models reproduce Western medicine's historical bias of underdiagnosing pain in Black patients

- Miss atypical presentations in women: Heart attacks in women frequently present with symptoms different from "classic" ones described in medical texts. AI trained on these texts may not recognize these patterns

- Fail in developing country health contexts: Tropical diseases, malnutrition, and conditions prevalent in low-income populations are underrepresented in training data

Privacy and Data Security: The Hidden Cost

Your Medical Data Isn't Yours

When you chat with an AI chatbot about your symptoms, you're sharing some of the most sensitive and intimate information possible. Most medical chatbots operate in regulatory gray areas. Unlike hospitals and clinics, which are legally required to follow strict health data protection regulations (HIPAA in the US, LGPD in Brazil, GDPR in Europe), many AI apps classify themselves as "wellness tools" or "general information" services to avoid these regulations.

In practice, this means your detailed conversation about depression symptoms, STD concerns, or family cancer history can be stored indefinitely on servers with unknown security practices, used to train future AI model versions, sold to data brokers, advertisers, or insurers, and accessed by hackers in case of a breach.

The (Absent) Role of Regulation

Regulation Can't Keep Up

The regulatory landscape for medical AI chatbots in 2026 is fragmented, confusing, and dangerously inadequate:

USA (FDA): Issued preliminary guidelines for "Software as a Medical Device" (SaMD) in 2023, but these were designed for traditional medical devices — not for generative LLMs that change behavior with each update.

European Union (EU AI Act): Classifies health AI applications as "high risk," requiring rigorous conformity assessments. However, practical implementation is slow.

Brazil (ANVISA): Published guidance on software as a medical device, but enforcement is limited. AI chatbots operating in Brazilian Portuguese are widely available without any certification.

What Should Exist

Experts converge on a core set of recommendations: mandatory clinical validation, algorithmic transparency, mandatory disclaimers, stronger data protection, clear civil liability, and regular bias audits.

The Positive Side: When AI Actually Helps

Despite the dangers, AI has transformative potential in medicine — when used correctly under professional supervision:

- Emergency triage: AI algorithms help prioritize patients in overcrowded ERs

- Rare disease detection: AI can cross-reference thousands of symptoms and identify patterns a GP might miss

- Dermatology and radiology: Computer vision models demonstrate accuracy comparable or superior to that of human specialists in detecting melanoma and reading X-rays

- Mental health: Emotional support chatbots like Woebot demonstrate efficacy in reducing mild anxiety and depression when used as a complement to therapy, not a substitute for it

The problem isn't AI itself. It's AI without supervision, validation, regulation, and transparency about its limitations.

Practical Recommendations for You

- Never use chatbots as substitutes for doctors for new, persistent, or serious symptoms

- Verify ANY AI diagnosis with a human healthcare professional

- Be skeptical of reassuring diagnoses — AI tends to underestimate severity

- Don't share identifiable information (full name, SSN, insurance numbers)

- Prefer clinically validated chatbots with documented regulatory approval

- When in doubt, seek emergency care — no chatbot replaces a physical examination
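The advice above about not sharing identifiable information can be partly automated. The following is a minimal sketch, not a complete privacy tool: it assumes a US Social Security number written in the hyphenated form 123-45-6789, and the names `SSN_PATTERN` and `redact_ssn` are hypothetical. It does not detect names, insurance numbers, or SSNs typed without hyphens, so manual review of a message is still needed.

```python
import re

# Matches US Social Security numbers written as 123-45-6789.
# Assumption: only this hyphenated format is handled.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact_ssn(message: str, placeholder: str = "[REDACTED]") -> str:
    """Replace hyphenated SSN-like numbers in a message with a placeholder."""
    return SSN_PATTERN.sub(placeholder, message)


if __name__ == "__main__":
    draft = "My SSN is 123-45-6789 and I have had headaches for a week."
    # Prints: My SSN is [REDACTED] and I have had headaches for a week.
    print(redact_ssn(draft))
```

Running a draft message through a filter like this before pasting it into a chatbot removes one obvious identifier while leaving the symptom description intact.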

Conclusion: The Responsibility Is Everyone's

The proliferation of medical AI chatbots is not going to reverse: the convenience and cost advantages are too powerful. But society must urgently answer a question: are we willing to accept hundreds of millions of people making potentially fatal health decisions based on clinically unvalidated algorithms that operate without regulatory oversight? The answer should be an unequivocal "no." Use AI as a tool, never as a doctor.